Maximum Likelihood Estimation, Loss Functions, and Regularization

In this post, we discuss where the loss functions used in most regression problems come from: maximum likelihood estimation (MLE). We start by introducing the likelihood function, then derive the L2 loss (mean squared error) from the MLE perspective. Finally, we briefly discuss the connection between MLE and regularization.

The likelihood function

In deep learning, we want to train a neural model that can reproduce the training data we have. From a probabilistic perspective, the training data can be seen as samples drawn from an unknown multivariate distribution, and we want to find a model that approximates that distribution. The most common way to do this is through maximum likelihood estimation (MLE).

Given data samples $\mathbf{x}=(x_1, x_2, \ldots, x_n)$ and a model with parameters $\boldsymbol{\theta}$, we use $P(\mathbf{x}\mid \boldsymbol{\theta})=f(\mathbf{x};\boldsymbol{\theta})$ to denote the joint probability mass function (or density, for continuous data) of all data points given the parameters $\boldsymbol{\theta}$. On the other hand, if we fix the data samples $\mathbf{x}$, then $f(\mathbf{x};\boldsymbol{\theta})$ can be seen as a function of $\boldsymbol{\theta}$, written $L(\boldsymbol{\theta}) = f(\mathbf{x};\boldsymbol{\theta})$, which is called the likelihood function. Note that the likelihood is not a probability distribution over $\boldsymbol{\theta}$: it does not have to sum or integrate to 1.

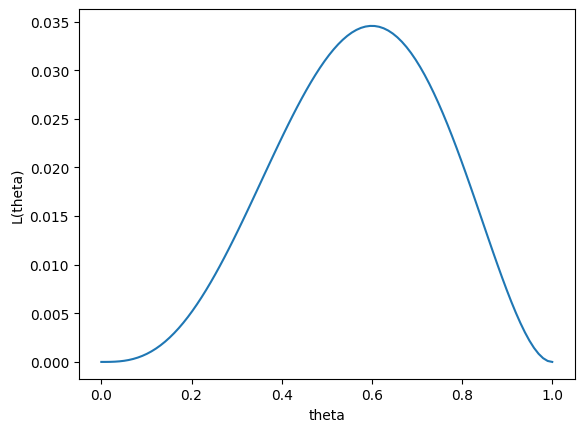

A simple and commonly used example illustrates the concept. Suppose we have a biased coin, and we use $\boldsymbol{\theta}$ to denote the probability of getting heads. If we know the value of $\boldsymbol{\theta}$, we can calculate the probability of getting a specific sequence of tosses. For example, the probability of getting $[H,H,T,H,T]$ in five tosses is $\boldsymbol{\theta}^3(1-\boldsymbol{\theta})^2$. On the other hand, if we don't know the value of $\boldsymbol{\theta}$ but have observed the sequence $[H,H,T,H,T]$, we can treat $\boldsymbol{\theta}$ as a variable and calculate the likelihood of observing that sequence for different values of $\boldsymbol{\theta}$. In this case, the likelihood function is $L(\boldsymbol{\theta}) = \boldsymbol{\theta}^3(1-\boldsymbol{\theta})^2$, which tells us how likely the observed sequence is for each value of $\boldsymbol{\theta}$. We can plot the likelihood function to visualize how it changes with $\boldsymbol{\theta}$:

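```python
import matplotlib.pyplot as plt
import numpy as np

# Candidate values for theta, the probability of heads
theta = np.linspace(0, 1, 100)

# Likelihood of observing [H, H, T, H, T]: three heads and two tails
p = theta**3 * (1 - theta)**2

plt.plot(theta, p)
plt.xlabel('theta')
plt.ylabel('L(theta)')
plt.show()
```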

From the plot, we can see that the likelihood function reaches its maximum value at $\boldsymbol{\theta} = 0.6$, which means that the most likely value of $\boldsymbol{\theta}$ given the observed data is 0.6.
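The maximum can also be located numerically rather than read off the plot. The following is a minimal sketch, assuming a simple grid search; the helper `coin_likelihood` and the grid resolution are illustrative choices, not part of the original example. For a coin, the maximizer also has a closed form, the fraction of heads, which the sketch computes for comparison.

```python
import numpy as np

def coin_likelihood(theta, heads, tails):
    """Likelihood of one specific sequence with the given head/tail counts."""
    return theta**heads * (1 - theta) ** tails

heads, tails = 3, 2  # counts in the observed sequence [H, H, T, H, T]

# Evaluate the likelihood on a fine grid of candidate values for theta.
grid = np.linspace(0.0, 1.0, 1001)
values = coin_likelihood(grid, heads, tails)

theta_grid = grid[np.argmax(values)]    # numerical maximizer on the grid
theta_closed = heads / (heads + tails)  # closed form: fraction of heads

print(theta_grid, theta_closed)
```

Both values should agree up to the grid resolution, which is why the plot peaks at the observed fraction of heads.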

Maximum likelihood estimation

The above example illustrates the essence of maximum likelihood estimation: we want to find the value of $\boldsymbol{\theta}$ that maximizes the likelihood function, i.e., the value that makes the observed data most likely under our model. Bayes' rule shows how this relates to finding the most probable parameters given the data.

The most probable value of $\boldsymbol{\theta}$ given the observed data is the one that maximizes the posterior:

\[\mathop{\mathrm{argmax}}_{\boldsymbol{\theta}} P(\boldsymbol{\theta}\mid \mathbf{x})\]

By applying Bayes' theorem, we can rewrite this as:

\[\mathop{\mathrm{argmax}}_{\boldsymbol{\theta}} \frac{P(\mathbf{x}\mid \boldsymbol{\theta})P(\boldsymbol{\theta})}{P(\mathbf{x})}\]

Here, since $P(\mathbf{x})$ is constant with respect to $\boldsymbol{\theta}$, we can ignore it in the optimization process. Meanwhile, if we don't have any prior knowledge about $\boldsymbol{\theta}$, we can assume a uniform prior, which means $P(\boldsymbol{\theta})$ is also constant (the regularization section discusses the case where we do know this prior). Therefore, the optimization problem simplifies to maximizing the likelihood:

\[\mathop{\mathrm{argmax}}_{\boldsymbol{\theta}} P(\mathbf{x}\mid \boldsymbol{\theta})\]

Negative Log-Likelihood

Although we can directly maximize the likelihood function, it is often more convenient to minimize the negative log-likelihood instead, i.e., \(\mathop{\mathrm{argmin}}_{\boldsymbol{\theta}} -\log P(\mathbf{x}\mid \boldsymbol{\theta})\).

Because the logarithm is monotonically increasing, minimizing the negative log-likelihood is equivalent to maximizing the likelihood, so we reach the same optimum while avoiding numerical issues. The likelihood itself can be extremely small, especially when we have a large number of data points. For example, if $\boldsymbol{\theta}=0.5$ in the coin toss example, the likelihood of observing a specific sequence of 100 tosses is $0.5^{100} \approx 7.9 \times 10^{-31}$, and with a few thousand tosses the product underflows to zero in double-precision floating point. By taking the logarithm, we transform the product of probabilities into a sum, which is more numerically stable. In the coin toss example, the log-likelihood is $100 \ln(0.5) \approx -69.31$, which is a much more manageable number to work with.
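The underflow problem is easy to reproduce. The sketch below is an illustration under assumed settings (2000 simulated tosses of a fair coin, a fixed random seed); it compares the raw product of probabilities with the sum of their logarithms.

```python
import numpy as np

rng = np.random.default_rng(0)

# Simulate 2000 tosses of a fair coin (1 = heads, 0 = tails).
tosses = rng.integers(0, 2, size=2000)
theta = 0.5

# Per-toss probabilities under the model: theta for heads, 1 - theta for tails.
probs = np.where(tosses == 1, theta, 1 - theta)

likelihood = np.prod(probs)             # product of 2000 factors of 0.5
log_likelihood = np.sum(np.log(probs))  # sum of 2000 terms equal to log(0.5)

print(likelihood)      # underflows to 0.0 in double precision
print(log_likelihood)  # about -1386.29
```

Once the product has collapsed to zero, every value of the parameter looks equally bad, whereas the log-likelihood still carries usable information.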

Meanwhile, minimizing the negative log-likelihood is also more convenient for gradient descent. If the data points are independent and identically distributed (i.i.d.), the likelihood function can be expressed as a product of individual probabilities: \(P(\mathbf{x}\mid \boldsymbol{\theta}) = \prod_{i=1}^n P(x_i\mid \boldsymbol{\theta})\) Thus, when we calculate the gradient of the likelihood function with respect to $\boldsymbol{\theta}$, we would need to apply the product rule, which can be complex and computationally expensive. However, when we take the logarithm, the product turns into a sum: \(\log P(\mathbf{x}\mid \boldsymbol{\theta}) = \sum_{i=1}^n \log P(x_i\mid \boldsymbol{\theta})\) This makes the gradient calculation much simpler, as we can now compute the gradient of each term in the sum independently and then sum them up, which is computationally more efficient.
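As a rough illustration of how the sum form plays with gradient descent, the sketch below minimizes the negative log-likelihood of the coin toss example. The starting value, learning rate, and number of steps are assumptions picked by hand for this toy problem; the gradient is simply the sum of the per-toss gradients.

```python
import numpy as np

# Observed tosses [H, H, T, H, T] encoded as 1 = heads, 0 = tails.
x = np.array([1, 1, 0, 1, 0], dtype=float)

def nll(theta):
    """Negative log-likelihood: a sum of independent per-toss terms."""
    return -np.sum(x * np.log(theta) + (1 - x) * np.log(1 - theta))

def nll_grad(theta):
    """Gradient of the NLL: the per-toss gradients simply add up."""
    return -np.sum(x / theta - (1 - x) / (1 - theta))

theta = 0.2           # arbitrary starting guess (assumption)
learning_rate = 0.01  # hand-picked step size (assumption)

for _ in range(500):
    theta -= learning_rate * nll_grad(theta)

print(theta)  # approaches 0.6, the fraction of heads
```

With real models the per-sample gradients are computed by automatic differentiation, but the structure is the same: one term per data point, summed or averaged.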

Mean Squared Error

Although negative log-likelihood provides a theoretically sound framework for deep learning, in practice, we often use specific loss functions that are derived from the negative log-likelihood for different types of problems. For example, in regression problems, we commonly use mean squared error (MSE) as the loss function.

To see how MSE can be derived from negative log-likelihood, let's consider a simple regression problem where we have a dataset of input-output pairs $(x_i, y_i)$, and we want to learn a function $f(x_i; \boldsymbol{\theta})$ that maps inputs to outputs. The key assumption in this case is that the observations are corrupted by Gaussian noise, which means that the observed outputs $y_i$ are generated from the function values $f(x_i; \boldsymbol{\theta})$ plus some Gaussian noise $\epsilon_i$:

\[y_i = f(x_i; \boldsymbol{\theta}) + \epsilon_i\]

where $\epsilon_i \sim \mathcal{N}(0, \sigma^2)$ is the Gaussian noise with mean 0 and variance $\sigma^2$. Under this assumption, the likelihood of observing the data given the parameters $\boldsymbol{\theta}$ can be expressed as

\[P(\mathbf{y}\mid \mathbf{x}, \boldsymbol{\theta}) = \prod_{i=1}^n P(y_i\mid x_i, \boldsymbol{\theta}) = \prod_{i=1}^n \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{(y_i - f(x_i; \boldsymbol{\theta}))^2}{2\sigma^2}\right)\]

Taking the logarithm of the likelihood function, we get the log-likelihood:

\[\log P(\mathbf{y}\mid \mathbf{x}, \boldsymbol{\theta}) = -\frac{n}{2} \log(2\pi\sigma^2) - \frac{1}{2\sigma^2} \sum_{i=1}^n (y_i - f(x_i; \boldsymbol{\theta}))^2\]

Since the first term does not depend on $\boldsymbol{\theta}$ (we treat the noise variance $\sigma^2$ as a fixed constant), we can ignore it when optimizing. Therefore, maximizing the log-likelihood is equivalent to minimizing the sum in the second term, i.e., the squared error: \(\mathop{\mathrm{argmin}}_{\boldsymbol{\theta}} \sum_{i=1}^n (y_i - f(x_i; \boldsymbol{\theta}))^2\). Dividing by $n$ does not change the minimizer, so we usually take the average of the squared error to get the mean squared error (MSE): \(\mathop{\mathrm{argmin}}_{\boldsymbol{\theta}} \frac{1}{n} \sum_{i=1}^n (y_i - f(x_i; \boldsymbol{\theta}))^2\).
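The equivalence can be checked numerically. The sketch below is illustrative only: it assumes a one-parameter linear model $f(x; w) = w x$, synthetic data with a known noise level, and a simple scan over candidate slopes. The Gaussian negative log-likelihood and the MSE differ by a constant and a positive scale factor, so they should pick the same slope.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data from y = 2x + Gaussian noise (all values are illustrative).
sigma = 0.5
x = np.linspace(-1.0, 1.0, 50)
y = 2.0 * x + rng.normal(0.0, sigma, size=x.shape)

def mse(w):
    """Mean squared error of the one-parameter model f(x; w) = w * x."""
    return np.mean((y - w * x) ** 2)

def gaussian_nll(w):
    """Negative log-likelihood under y_i ~ N(w * x_i, sigma^2)."""
    n = len(x)
    sq_err = np.sum((y - w * x) ** 2)
    return 0.5 * n * np.log(2 * np.pi * sigma**2) + sq_err / (2 * sigma**2)

# Scan candidate slopes and locate the minimizer of each objective.
candidates = np.linspace(0.0, 4.0, 401)
w_mse = candidates[np.argmin([mse(w) for w in candidates])]
w_nll = candidates[np.argmin([gaussian_nll(w) for w in candidates])]

print(w_mse, w_nll)  # the two minimizers coincide
```

The noise level only rescales the objective, which is why it can be ignored when fitting the parameters.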

On the other hand, if we assume that the noise follows a different distribution, such as a Laplace distribution with scale $b$:

\[P(\epsilon_i) = \frac{1}{2b} \exp\left(-\frac{|\epsilon_i|}{b}\right)\]

then the same derivation yields a different loss function, the mean absolute error (MAE):

\[\mathop{\mathrm{argmin}}_{\boldsymbol{\theta}} \frac{1}{n} \sum_{i=1}^n |y_i - f(x_i; \boldsymbol{\theta})|\]

Regularization

In practice, we often add a regularization term to the loss function to prevent overfitting and improve generalization. Regularization can be seen as adding a prior distribution over the parameters $\boldsymbol{\theta}$ to the maximum likelihood framework, which turns MLE into maximum a posteriori (MAP) estimation.

If we keep the prior $P(\boldsymbol{\theta})$ instead of assuming it is uniform, maximizing the posterior is equivalent to minimizing the negative log-likelihood plus a penalty that comes from the prior:

\[\mathop{\mathrm{argmin}}_{\boldsymbol{\theta}} -\log P(\mathbf{x}\mid \boldsymbol{\theta}) - \log P(\boldsymbol{\theta})\]

If the prior is a zero-mean Gaussian distribution, i.e., $\boldsymbol{\theta} \sim \mathcal{N}(0, \sigma^2 I)$ (here $\sigma^2$ is the variance of the prior, not of the noise), then the second term becomes an L2 penalty: \(- \log P(\boldsymbol{\theta}) = \frac{1}{2\sigma^2} \|\boldsymbol{\theta}\|^2 + \text{constant}\). Thus, the loss function with L2 regularization can be written as:

\[\mathop{\mathrm{argmin}}_{\boldsymbol{\theta}} -\log P(\mathbf{x}\mid \boldsymbol{\theta}) + \lambda \|\boldsymbol{\theta}\|^2\]

where the first term is the MSE or MAE loss derived above (up to scaling and additive constants), and the second term is the L2 regularization with strength $\lambda = \frac{1}{2\sigma^2}$, so a narrower prior gives stronger regularization. Similarly, if we assume a Laplace prior over the parameters, we obtain an L1 regularization term, which encourages sparsity in the parameters.
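To make the link between the Gaussian prior and L2 regularization concrete, here is a small sketch for linear regression. It is an illustration under assumed settings: the data are synthetic, and `noise_std` and `prior_std` are hypothetical names for the standard deviations of the noise and of the prior. Multiplying the negative log-posterior by twice the noise variance gives the familiar ridge objective $\|\mathbf{y} - X\mathbf{w}\|^2 + \lambda' \|\mathbf{w}\|^2$ with $\lambda'$ equal to the noise variance divided by the prior variance, so the ridge solution should be a stationary point of the negative log-posterior.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative linear-regression data: y = X @ w_true + Gaussian noise.
noise_std = 0.5  # standard deviation of the observation noise (assumption)
prior_std = 1.0  # standard deviation of the Gaussian prior on w (assumption)
X = rng.normal(size=(30, 3))
w_true = np.array([1.0, -2.0, 0.5])
y = X @ w_true + rng.normal(0.0, noise_std, size=30)

def neg_log_posterior_grad(w):
    """Gradient of -log P(y | X, w) - log P(w), dropping constants."""
    grad_nll = -X.T @ (y - X @ w) / noise_std**2
    grad_prior = w / prior_std**2
    return grad_nll + grad_prior

# Ridge regression minimizes ||y - Xw||^2 + lam * ||w||^2 in closed form.
lam = noise_std**2 / prior_std**2
w_ridge = np.linalg.solve(X.T @ X + lam * np.eye(3), X.T @ y)

# Ordinary least squares corresponds to a flat prior (lam = 0).
w_mle = np.linalg.solve(X.T @ X, X.T @ y)

print(np.linalg.norm(neg_log_posterior_grad(w_ridge)))  # close to zero
print(np.linalg.norm(w_ridge), np.linalg.norm(w_mle))   # ridge norm is smaller
```

The prior pulls the estimate toward zero, which is the shrinkage effect that L2 regularization is known for.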

